Large language models (LLMs) are now used for search, support, analytics, copilots, report drafting, and code assistance. But “it sounds good” is not an evaluation strategy. Data teams need repeatable ways to measure quality, cost, and risk before a model reaches production. Whether you are building an internal assistant or advising learners from a data science course in Bangalore, the same principle applies: define success in measurable terms and test it systematically.

1) Start with the use case and an evaluation plan

Begin with a short “task contract”:

- What is the job? (summarise tickets, generate SQL, classify leads, answer policy questions)

- What inputs can the model see? (documents, databases, tools, user context)

- What output format is required? (JSON, bullets, SQL only, citations)

- What failures are unacceptable? (hallucinated facts, privacy leaks, wrong SQL, toxic language)

Choose two baselines: a simple non-LLM method (rules/templates) and your current best prompt/model. This gives you a realistic yardstick. Then plan three layers of evaluation: offline tests (fast iteration), human review (ground truth), and online monitoring (real impact).

2) Benchmarks that match your data and workflows

Public benchmarks can be useful, but they rarely match your domain vocabulary, compliance needs, or tool use. Build a “gold set” that mirrors real work:

- Representative prompts: include common requests and edge cases.

- Ground truth: store the expected answer, or the required facts and constraints.

- Context packages: if you use retrieval, include the correct sources plus distractors.
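As a rough illustration of the three ingredients above, a gold-set entry can be stored as a small record. The sketch below is one possible layout, not a standard format: the `GoldItem` class, its field names, and the document IDs are all hypothetical, and the substring check is a deliberately crude stand-in for a real fact matcher.

```python
from dataclasses import dataclass, field


@dataclass
class GoldItem:
    """One gold-set entry (hypothetical structure, not a standard format)."""
    prompt: str
    required_facts: list[str]   # facts a correct answer must state
    source_ids: list[str]       # documents that contain the answer
    distractor_ids: list[str] = field(default_factory=list)  # plausible but wrong
    tags: list[str] = field(default_factory=list)            # e.g. "edge_case"


def missing_facts(item: GoldItem, answer: str) -> list[str]:
    """Return required facts that do not appear in the answer (case-insensitive)."""
    lowered = answer.lower()
    return [fact for fact in item.required_facts if fact.lower() not in lowered]


item = GoldItem(
    prompt="What is the refund window and what proof is needed?",
    required_facts=["30 days", "receipt"],
    source_ids=["policy_refunds_v3"],
    distractor_ids=["policy_refunds_v1"],
    tags=["common"],
)
answer = "Refunds are accepted within 30 days of purchase."
print(missing_facts(item, answer))  # ['receipt']
```

Storing required facts rather than one exact answer lets several differently worded outputs pass, which usually suits generation tasks better than string equality.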

For classification tasks, track accuracy, precision, recall, and confusion matrices. For generation tasks, avoid relying on a single number like BLEU. Instead, use task checks: did the answer follow the schema, include required entities, and stay within allowed policies? Also measure operational metrics: latency, token cost, tool-call rate, and failure rate. Teams hiring graduates from a data science course in Bangalore often miss this point: a helpful answer that arrives late or costs too much will struggle to scale.
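For the classification metrics mentioned above, a minimal sketch using only the standard library might look like this. The labels and predictions are made-up sample data, and the function names are illustrative; in practice a library such as scikit-learn would usually do this work.

```python
from collections import Counter


def confusion_counts(y_true, y_pred):
    """Count (true_label, predicted_label) pairs, i.e. a sparse confusion matrix."""
    return Counter(zip(y_true, y_pred))


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def precision_recall(y_true, y_pred, positive):
    """Precision and recall for one class treated as the positive label."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Made-up lead-classification labels for illustration only.
y_true = ["hot", "cold", "hot", "cold", "hot"]
y_pred = ["hot", "hot", "cold", "cold", "hot"]

print(accuracy(y_true, y_pred))
print(precision_recall(y_true, y_pred, positive="hot"))
print(confusion_counts(y_true, y_pred))
```

Reporting precision and recall per class alongside the confusion counts shows which kind of mistake dominates, which a single accuracy figure hides.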

3) Rubrics for human review and stakeholder alignment

A rubric turns subjective judgment into consistent scoring. Keep it short and tie it to business risk. A practical rubric for many LLM applications includes:

- Correctness: statements are true and consistent with the provided data.

- Completeness: all constraints are met; key steps are not missing.

- Faithfulness: claims are grounded in retrieved documents or permitted sources.

- Clarity and format: output is readable and matches the required structure.

- Safety and compliance: no sensitive data exposure or disallowed advice.

Use a 1–5 scale with brief “anchor” examples so reviewers score similarly. Calibrate by having reviewers grade the same sample set, then discuss disagreements. Track agreement over time; if it collapses, your rubric is too vague or your tasks have shifted.
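One common way to track reviewer agreement is Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The sketch below is a plain-Python version for two reviewers, with invented scores. Note that it treats the 1–5 scale as unordered categories; a weighted kappa, which gives partial credit for near misses, may fit ordinal rubric scores better.

```python
from collections import Counter


def cohens_kappa(scores_a, scores_b):
    """Unweighted Cohen's kappa for two reviewers scoring the same items."""
    if len(scores_a) != len(scores_b) or not scores_a:
        raise ValueError("need two non-empty score lists of equal length")
    n = len(scores_a)
    # Observed agreement: share of items where both reviewers gave the same score.
    observed = sum(a == b for a, b in zip(scores_a, scores_b)) / n
    # Chance agreement: product of each reviewer's marginal rate, summed over scores.
    counts_a, counts_b = Counter(scores_a), Counter(scores_b)
    expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both reviewers used a single identical score throughout
    return (observed - expected) / (1 - expected)


# Invented rubric scores from two reviewers on the same eight outputs.
reviewer_1 = [5, 4, 4, 2, 1, 3, 5, 2]
reviewer_2 = [5, 4, 3, 2, 1, 3, 4, 2]
print(round(cohens_kappa(reviewer_1, reviewer_2), 3))
```

A kappa near 1 indicates strong agreement and a value near 0 indicates agreement no better than chance, so a falling trend across calibration rounds is a prompt to revisit the anchors.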

4) Automated evaluation, including LLM-as-a-judge, done safely

Automation speeds iteration, but it must be controlled. High-value automated checks include:

- Schema validation for structured outputs (required fields, types, allowed values).

- Unit tests for generated code and SQL (run in a sandbox).

- Groundedness checks (does the answer cite or quote the provided context?).

- Regression suites to detect quality drops after changes.
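The first check in the list, schema validation, can be sketched with the standard `json` module alone. The field names, types, and allowed priorities below are assumptions chosen for a ticket-summary example; a real project might use a JSON Schema validator instead.

```python
import json

# Hypothetical contract for a ticket-summary task.
REQUIRED_FIELDS = {"ticket_id": int, "summary": str, "priority": str}
ALLOWED_PRIORITIES = {"low", "medium", "high"}


def validate_output(raw: str) -> list[str]:
    """Return a list of schema errors; an empty list means the output passed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value must be a JSON object"]

    errors = []
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in data:
            errors.append(f"missing field: {name}")
            continue
        value = data[name]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected_type):
            errors.append(f"wrong type for field: {name}")

    priority = data.get("priority")
    if isinstance(priority, str) and priority not in ALLOWED_PRIORITIES:
        errors.append(f"priority not allowed: {priority}")
    return errors


good = '{"ticket_id": 42, "summary": "Login fails on mobile", "priority": "high"}'
bad = '{"ticket_id": "42", "summary": "Login fails", "priority": "urgent"}'
print(validate_output(good))  # []
print(validate_output(bad))
```

Running a check like this over every gold-set output after each prompt or model change gives a cheap regression signal before any human review.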

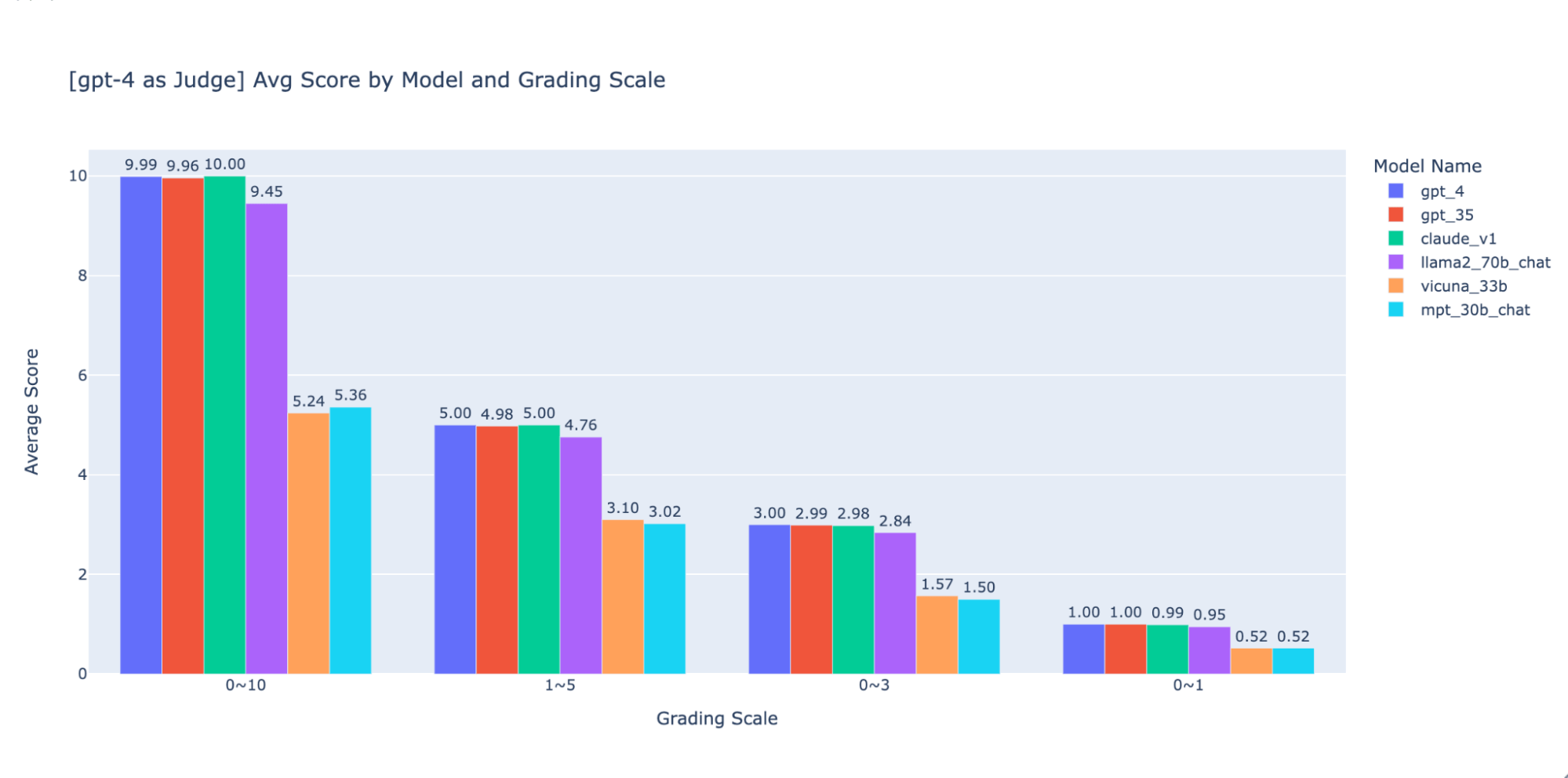

Many teams also use an LLM to score outputs against the rubric. This can help with triage and pairwise comparisons, but the judge can be biased or overconfident. Reduce risk by fixing the judge prompt, hiding model identities, adding adversarial examples, and routinely spot-checking with humans. Treat it like any other labelling pipeline you would validate in a data science course in Bangalore capstone: sample, audit, and correct.
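Two of these safeguards, hiding model identities and avoiding position effects, can be sketched as a thin wrapper around the judge. Everything here is an assumption for illustration: `judge` is any callable that returns `"first"`, `"second"`, or `"tie"`, and the length-based stub stands in for a real LLM call so the example runs offline.

```python
import random


def blind_pairwise(prompt, output_a, output_b, judge, rng):
    """Compare two outputs without revealing which system produced which.

    The judge only sees "first" and "second"; the order is randomised so a
    judge that favours one position cannot systematically favour one system.
    Returns "a", "b", or "tie".
    """
    swapped = rng.random() < 0.5
    first, second = (output_b, output_a) if swapped else (output_a, output_b)
    verdict = judge(prompt, first, second)
    if verdict == "tie":
        return "tie"
    if verdict not in ("first", "second"):
        raise ValueError(f"unexpected judge verdict: {verdict!r}")
    picked_first = verdict == "first"
    # Map the positional verdict back to the original system label.
    return "a" if picked_first != swapped else "b"


def length_judge(prompt, first, second):
    """Stub judge (NOT a real quality signal): prefers the longer answer."""
    if len(first) == len(second):
        return "tie"
    return "first" if len(first) > len(second) else "second"


rng = random.Random(0)  # seeded so runs are repeatable
result = blind_pairwise(
    "Summarise the ticket.",
    "User cannot log in on mobile after the latest update.",
    "Login issue.",
    judge=length_judge,
    rng=rng,
)
print(result)  # a
```

Logging each verdict with the swap flag also makes it possible to audit later whether the judge leans toward one position.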

5) Real business metrics: the final proof

An LLM can score well offline and still disappoint users. Tie evaluation to business outcomes and validate them with experiments:

- Support: resolution time, deflection rate, escalation rate, CSAT.

- Sales: qualified lead rate, reply rate, conversion, time-to-first-response.

- Analytics copilots: time saved per report, SQL error rate, rework cycles.
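For rate-style metrics such as deflection or conversion, one simple way to validate an experiment is a two-proportion z-test between the control and the LLM-assisted group. The sketch below uses invented counts and assumes large, independent samples; it illustrates the calculation and is not a substitute for a properly designed experiment.

```python
import math


def two_proportion_z_test(success_a, n_a, success_b, n_b):
    """Two-sided z-test for a difference in rates between groups A and B.

    Returns (z, p_value). Uses the pooled-variance normal approximation,
    which is only reasonable for large samples.
    """
    p_a = success_a / n_a
    p_b = success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variance: both groups are all successes or all failures")
    z = (p_b - p_a) / se
    # Two-sided p-value under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Invented numbers: tickets deflected out of tickets received.
control = (100, 1000)    # existing help centre
treatment = (150, 1000)  # help centre plus LLM assistant
z, p_value = two_proportion_z_test(*control, *treatment)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

A small p-value suggests the difference is unlikely to be noise, but the size of the effect and its cost still decide whether the rollout is worthwhile.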

Use phased rollouts with guardrails such as fallbacks, approval flows for high-risk actions, and rate limits. Monitor drift: changes in user questions, new product terms, updated policies, and upstream data shifts. Log prompts, retrieved context, outputs, and feedback so you can diagnose failures and refresh your gold set.

Conclusion

LLM evaluation works best as a pipeline: define the task, build realistic benchmarks, apply a rubric with calibrated human review, automate what you can, and confirm impact with business metrics. When data teams treat evaluation as ongoing product work, not a one-time checklist, they ship systems that are safer, cheaper to run, and easier to improve over time, whether for enterprise deployments or learners preparing through a data science course in Bangalore.